10.4 Reducing Evaporative Losses

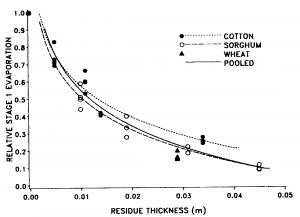

Evaporation from the soil can consume a substantial portion of the available water in cropping systems, and farmers have long sought methods to reduce evaporative losses. One effective method to conserve water during the first stage of evaporation is to keep the soil covered with crop residues. Crop residues reduce the amount of solar radiation reaching the soil surface and can reduce the rate of water vapor transport away from the surface, both of which lower the evaporative demand. The data in Fig. 10‑5 illustrate how cotton, sorghum, and wheat residues have similar beneficial effects in reducing the stage 1 evaporation rate when present in equivalent thicknesses [6]. The water-conserving effects of crop residue may be offset, however, because a lower stage 1 evaporation rate may prolong stage 1, so the soil keeps evaporating at that rate for a longer time.
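The trade-off between a lower stage 1 rate and a longer stage 1 can be made concrete with a small numerical sketch. The model below is an idealization assumed here for illustration, not a method taken from this chapter: stage 1 proceeds at a constant rate until a fixed depth of water has evaporated, after which cumulative stage 2 evaporation grows with the square root of time. The function name and all parameter values (`BARE_RATE`, `RESIDUE_RATE`, `U_LIMIT`, `ALPHA`) are hypothetical, and the residue is assumed to change only the stage 1 rate.

```python
import math


def cumulative_evaporation(t_days, e1, u_limit, alpha):
    """Cumulative evaporation (mm) after t_days of drying.

    Idealized two-stage model (an assumption for illustration):
      stage 1: constant rate e1 (mm/day) until u_limit (mm) has evaporated
      stage 2: u_limit + alpha * sqrt(time since stage 1 ended)
    """
    t1 = u_limit / e1  # duration of stage 1 in days
    if t_days <= t1:
        return e1 * t_days
    return u_limit + alpha * math.sqrt(t_days - t1)


# Hypothetical parameter values, chosen only to show the behavior.
U_LIMIT = 12.0       # mm evaporated before stage 1 ends
ALPHA = 3.5          # mm per sqrt(day), stage 2 coefficient
BARE_RATE = 8.0      # mm/day, stage 1 rate for bare soil
RESIDUE_RATE = 4.0   # mm/day, stage 1 rate under residue

for day in (2, 5, 10):
    bare = cumulative_evaporation(day, BARE_RATE, U_LIMIT, ALPHA)
    covered = cumulative_evaporation(day, RESIDUE_RATE, U_LIMIT, ALPHA)
    print(f"day {day:2d}: bare {bare:5.1f} mm, "
          f"residue {covered:5.1f} mm, saved {bare - covered:4.1f} mm")
```

With these assumed values, the residue-covered soil stays in stage 1 twice as long as the bare soil, and the water saved is largest early in the drying period and shrinks as drying continues. How much of the saving persists in a real field depends on how soon the next rain or irrigation arrives and on effects this sketch ignores.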

In irrigated cropping systems and landscapes, evaporative losses can be reduced by decreasing the frequency of surface-applied irrigation. Every time the surface is wetted, evaporation returns to first-stage levels. Applying the same total irrigation amount in larger doses at longer intervals can reduce the time spent in first-stage evaporation and thus reduce cumulative evaporation. Even greater reductions in soil evaporation may be obtained by using subsurface irrigation instead of surface irrigation. A study in Texas found that up to 10% of the total seasonal water inputs might be saved through reduced evaporation when using drip emitters at the 30-cm depth instead of surface drip emitters [7].
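The effect of irrigation frequency on cumulative evaporation can be sketched with a simple calculation. The example assumes an idealized two-stage model that is not taken from this chapter: each irrigation fully rewets the surface and restarts stage 1 at a constant rate, and once a fixed depth has evaporated, stage 2 evaporation grows with the square root of time. The function names and default parameter values are hypothetical, and the sketch ignores crop shading, rainfall, and any limit on the water available to evaporate.

```python
import math


def cycle_evaporation(interval_days, e1, u_limit, alpha):
    """Evaporation (mm) during one drying cycle between irrigations.

    Idealized two-stage model (an assumption for illustration):
      stage 1: constant rate e1 (mm/day) until u_limit (mm) has evaporated
      stage 2: u_limit + alpha * sqrt(time since stage 1 ended)
    """
    t1 = u_limit / e1  # duration of stage 1 in days
    if interval_days <= t1:
        return e1 * interval_days
    return u_limit + alpha * math.sqrt(interval_days - t1)


def seasonal_evaporation(season_days, interval_days,
                         e1=8.0, u_limit=12.0, alpha=3.5):
    """Total evaporation (mm) over a season of repeated drying cycles.

    Assumes every irrigation resets the soil to the start of stage 1.
    Only whole cycles are counted; leftover days are ignored.
    """
    n_cycles = season_days // interval_days
    return n_cycles * cycle_evaporation(interval_days, e1, u_limit, alpha)


# Compare irrigation intervals over a hypothetical 60-day season.
for interval in (3, 6, 10):
    total = seasonal_evaporation(60, interval)
    print(f"irrigate every {interval:2d} days: {total:6.1f} mm evaporated")
```

With these assumed parameters, seasonal evaporation falls as the interval between irrigations lengthens, because a smaller share of the season is spent at the high first-stage rate. The absolute numbers are illustrative only.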

A variety of other methods for reducing evaporation have been tried. Some, like plastic mulches, have proven quite effective, while others have proven ill-conceived. Perhaps the most infamous was the practice some called “dust mulch”, which contributed to one of the greatest natural disasters in the history of the United States.